A saying I’ve latched onto again lately is that HDR is arguably more important than 4K when it comes to visual quality. It takes a large screen to be able to tell the difference between 4K and 1080p, and even then, there’s a good chance you’re watching upscaled 1080p most of the time. HDR, conversely, is as noticeable on a 42-inch TV as an 82-inch one, since it expands color palettes and makes highlights pop.

While new standards are around the corner, HDR10+ is one of the two leading formats at the moment and isn’t going anywhere. I’m going to dissect its drawbacks mainly in relation to Dolby Vision. For a preview, let’s just say that there’s a good reason HDR10+ Advanced is coming down the pike.

There’s less content than Dolby Vision

The scales are still balancing

You might be (mildly) surprised to learn that Dolby Vision dates back to 2014, long before many TVs had 4K. The vanilla version of HDR10 only emerged the following year, leaving Vision not just with a head start and better brand recognition, but with a technical advantage: whereas Vision is a dynamic standard that can adjust on a per-frame basis, HDR10 metadata is static for any given movie or show.

HDR10+ was released in 2017 to help close the gap, but nevertheless, you’re more likely to find content mastered for Vision than HDR10+. In fact, Netflix, Disney+, and Hulu only began supporting HDR10+ in 2025, despite the fact that Amazon Prime Video has featured it since the format’s early days. This means that if you want guaranteed HDR content, you’re better off focusing on Vision support, although it’s increasingly common for TVs and media streamers to include equal hardware compatibility. More on this in a minute.

This discrepancy extends to Blu-ray discs. While some titles do support both Vision and HDR10+, they’re more likely to offer the former if there’s any pressure to pick one over the other. It’s not just that Vision was around first — it’s that it’s a higher priority for mastering, being the more impressive of the two standards.

HDR10+ isn’t as technically capable as Vision

Mostly an issue on the latest and greatest TVs

I’m not particularly demanding when it comes to AV formats. My general opinion is that unless it’s botched, any HDR is better than no HDR. I grew up on VHS tapes and analog broadcast TV, so as far as I’m concerned, arguing that one HDR standard isn’t vibrant or detailed enough is like complaining that your filet mignon needs more pepper.

If I’m going to nitpick, Vision is the technically superior format. For starters, Vision supports up to 12-bit color, whereas HDR10+ is 10-bit. This gives Vision the edge in accuracy, including better handling of gradients. You’ll need a TV capable of 12-bit color to get the most out of this, mind, and the odds are that you don’t have one. On top of that, many of the colors in the 10- and 12-bit ranges are beyond the human eye’s ability to discern. People just tend to recognize HDR as more colorful.
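If you’re curious what the jump from 10-bit to 12-bit means in raw numbers, here’s a quick back-of-the-envelope sketch in Python. It only counts shades per color channel and the resulting RGB combinations. It says nothing about what a given panel can display or what your eye can distinguish. The function names are mine, and I’ve included 8-bit (standard SDR video) purely as a reference point.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct shades a single color channel can encode."""
    return 2 ** bits


def total_colors(bits: int) -> int:
    """Number of RGB combinations when all three channels share a bit depth."""
    return levels_per_channel(bits) ** 3


if __name__ == "__main__":
    # 8-bit is included as an SDR baseline; 10 and 12 are the depths discussed above.
    for bits in (8, 10, 12):
        print(
            f"{bits}-bit: {levels_per_channel(bits):,} shades per channel, "
            f"{total_colors(bits):,} possible colors"
        )
```

Roughly speaking, 10-bit works out to about 1.07 billion colors and 12-bit to about 68.7 billion. Each extra two bits quadruples the steps available per channel, which is where the smoother gradients come from.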

Vision can additionally handle up to 10,000 nits of brightness, whereas HDR10+ caps out at 4,000 nits. As with 12-bit color, this difference tends to be irrelevant in most situations, since most TVs can’t hit the 4,000 mark yet, and both figures are insanely bright — my Apple Watch Ultra 2 is readable in the midday sun at 3,000 nits. Nevertheless, the impending wave of RGB mini-LED TVs may finally put Vision to the test, since some models should theoretically peak at 8,000 to 10,000 nits.

It’ll be interesting to see how Vision 2 and HDR10+ Advanced play out once they become mainstream, since both are capable of handling more colors and nits than anyone needs. The main difference is that Vision 2 relies heavily on filmmaker control, while Advanced is more AI-based.

Drawing needless battle lines

You’d think that with HDR10+ being royalty-free, device makers would be rushing to support it, hoping that it might take over and kick Dolby to the curb. That seems to be Samsung’s gambit. The company spearheaded the development of the standard, and actively refuses to support Vision on its TVs, no matter if they cost four, five, or six digits.

HDR10+ is conspicuously missing in some other parts of the industry, though, most notably on TVs by LG and Sony, such as the C5 and Bravia 9. Those brands have gone all-in on Vision as their dynamic format, with only two static options — HDR10 and HLG — serving as fallback. Presumably this is because the companies have long supported Vision, and see no need to spend resources on a format that’s technically inferior and less popular.

There’s also a chance that the console or media streamer you’re using doesn’t support HDR10+. The Apple TV 4K didn’t include it until the 2022 model, and it’s missing in action on every game console. Most consoles are liable to only support HDR10, the main exceptions being the Xbox Series X and S, which are equipped with Dolby Vision.

Ironically perhaps, there’s better news in the budget department. You’re going to find HDR10+ on TVs from brands like TCL and Hisense, and even the cheapest Amazon Fire TV streamers support it, which follows — the company wants you hooked on Prime Video. Remember, of course, that you can’t add an HDR format to a TV that’s not already compatible with it.

PC support isn’t automatic

When is the PC world going to catch up?

Another thing you might be shocked to learn is that by default, Windows 11 only supports HDR10. There’s some logic to skipping Dolby Vision, given that Microsoft would have to pay royalties, and other parties (like PC makers) can pay for Vision compatibility if they want it that badly. With HDR10+ being completely free, however, its absence is a little head-scratching.

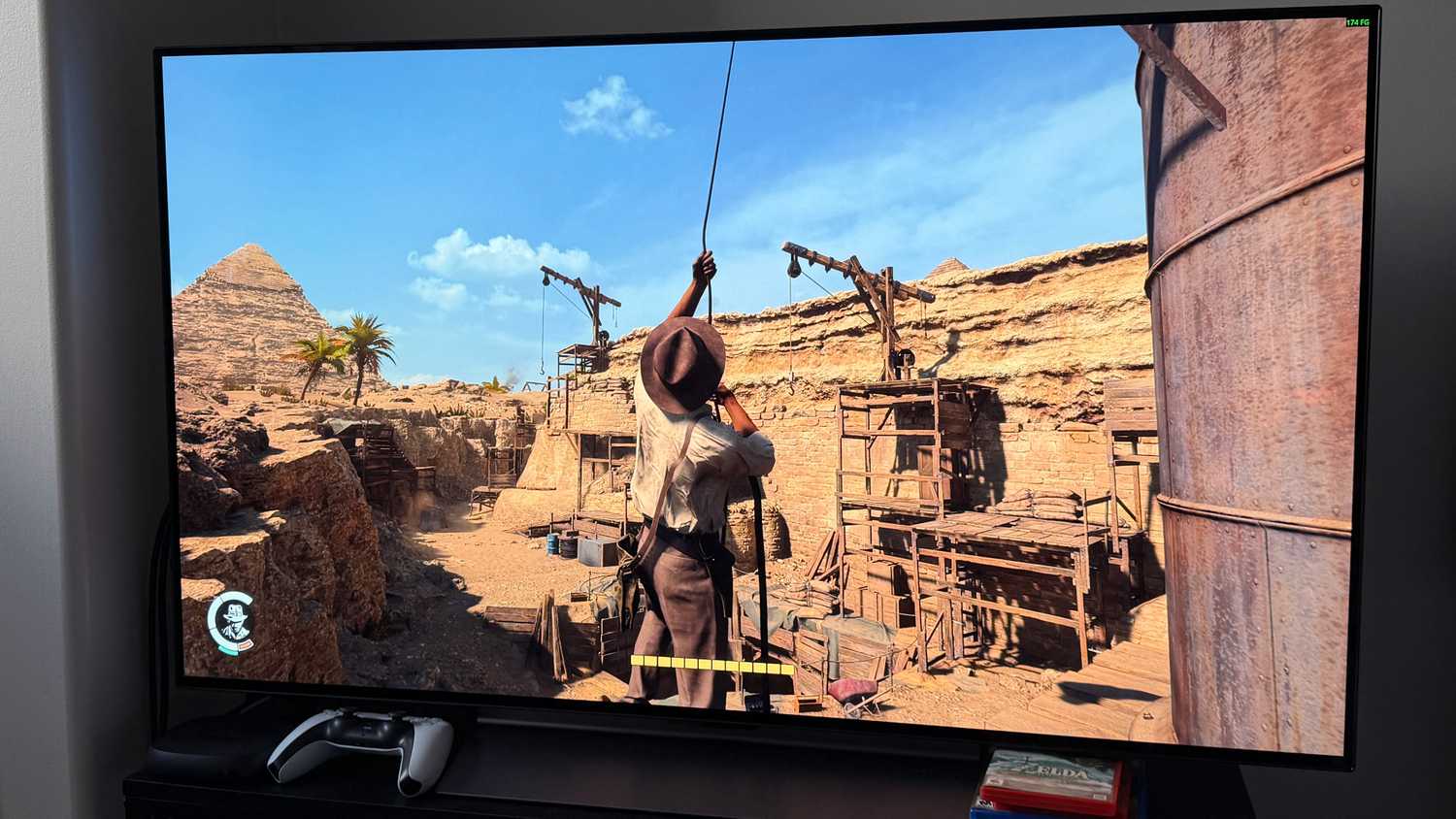

Expect to jump through some hoops if you want to experience HDR10+. Playing local videos is likely to require special software, and either way, you’ll need to double-check that your display and GPU are compatible. As for games, the list of enabled titles is remarkably short. The official HDR10+ website only lists 13, including Battlefield 6, Cyberpunk 2077, and Hell is Us. Dolby Vision compatibility isn’t what it should be either, but it’s still well ahead.

Really, there’s less incentive to add HDR10+ to PC software than there is to movies and TV shows. It complicates rendering, which is the last thing any game developer wants, especially if it adds input lag. Also, it’s possible to approximate it well enough through things like Windows’ Auto HDR feature, which can even bring the tech to games that lack native support. It’s not perfect — driving from a dark tunnel into a sunlit day in Cyberpunk isn’t supposed to be eye-searing — but I’ll take it.