Microsoft is notorious for thinking up a brilliant idea, only to unceremoniously trash it before it has the chance to find its footing in the market (see Windows Subsystem for Android, Windows Mixed Reality, Surface Duo, and any number of other entries listed on the unofficial Killed by Microsoft website).

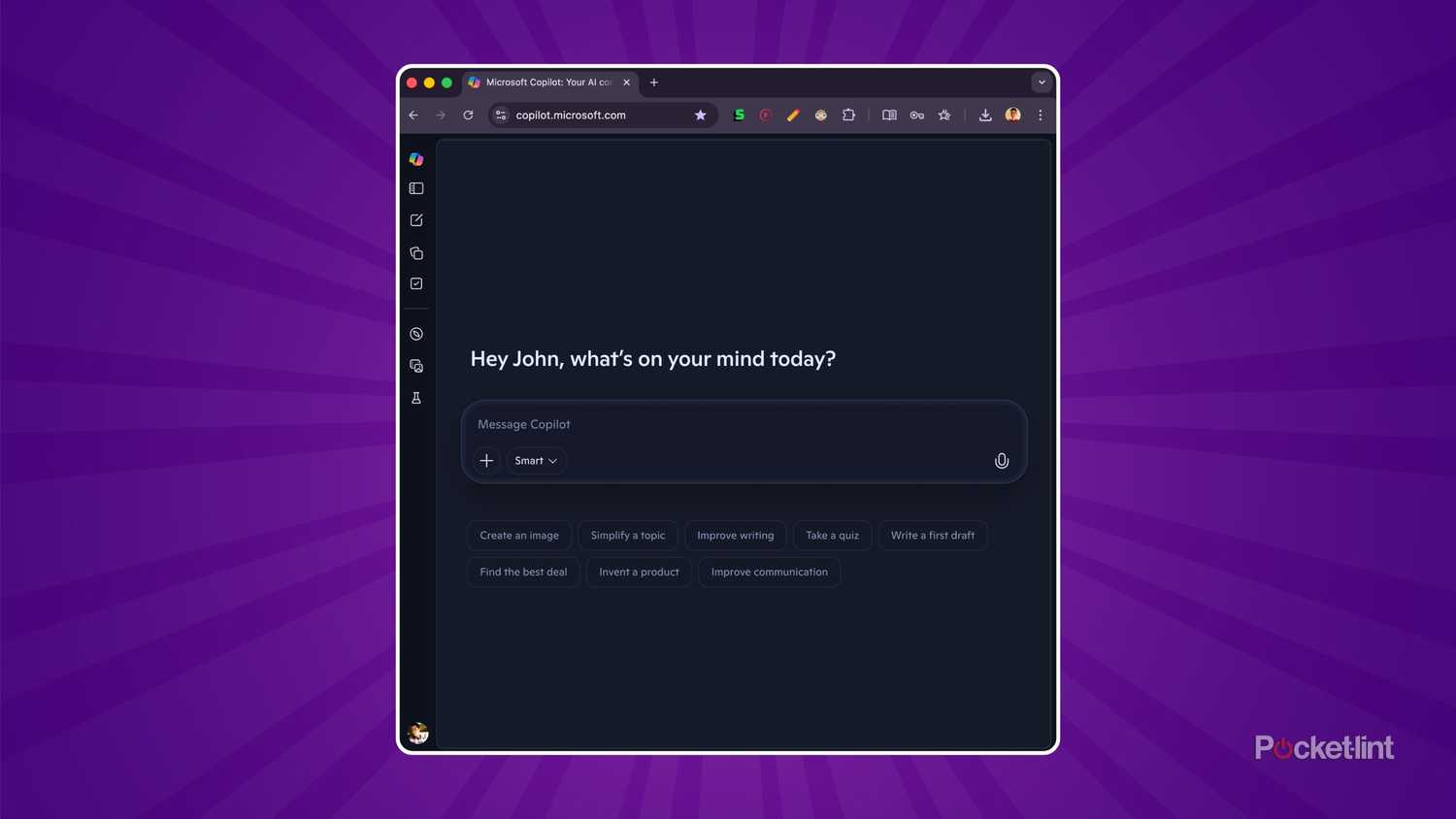

Next in line to receive the boot, and one of Microsoft’s first axings of 2026, is Real Talk, a specialized mode within the company’s Copilot AI chatbot that was designed to disagree with you and push back against your thought processes, while also providing the option to peek under the hood and see how the technology was doing its thinking.

As confirmed by Windows Latest, Copilot’s Real Talk feature has now been officially pulled, with users no longer able to engage in new conversations with the mode. Additionally, all existing Real Talk conversations have been automatically archived.

“Real Talk was always an experiment. We’ve decided the best path forward is to integrate learnings from the early testing into Copilot more broadly rather than maintain it as a separate feature,” says Microsoft in a statement to Windows Latest.

Real Talk first launched to the public as a US-exclusive feature in January of this year, and it had only begun to roll out more broadly to other markets in recent weeks. Thankfully, it does sound like various components of the Real Talk experience might soon make their way into Copilot proper, which is certainly a silver lining in what is otherwise disappointing news.

Real Talk was a breath of fresh air

I don’t want AI validation, but rather for my ideas to be challenged

One problem I perpetually experience when interacting with AI chatbots built on large language model (LLM) technology is that they hardly ever push back or offer alternative perspectives of consequence. For the most part, I only ever receive affirmations, quips of approval, and other forms of validation that feel contrived and, well, artificial.

When doing research, brainstorming ideas, or simply looking to pass some time by chatting with an AI model, I want to interact with an opinionated, personality-driven chatbot that’s willing to tell me when I’ve missed the mark. I want my AI to tell me things as they are, in the same way a real-life friend or family member who cares about me might do.

I’ve always been on the cynical side when it comes to how much utility LLM-powered chatbots actually bring to the table, as well as how much potential they have for greatness, but I do think there’s room for them to serve a purpose now and into the future. ChatGPT, Gemini, Claude, and other AI assistants can genuinely come in handy for inquiring about a subject, but they’ll never satisfy that human-to-human social connection if they don’t start to fully embrace a Real Talk-like mentality.