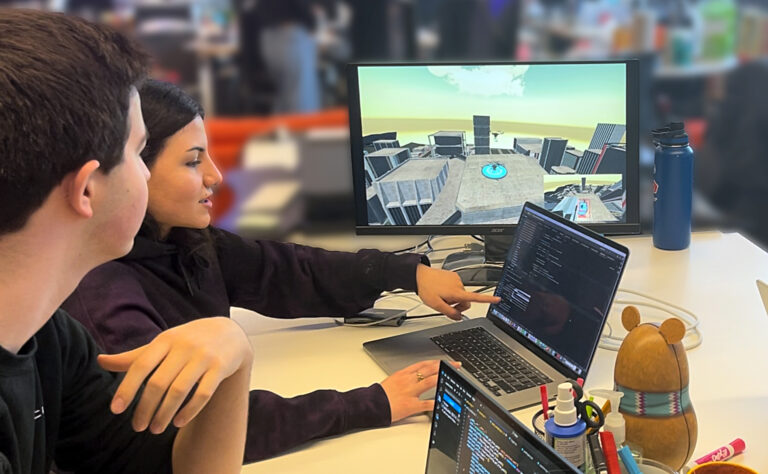

Mya, aged 3, and her mother Vicky playing with an AI toy called Gabbo during an observation at the University of Cambridge’s Faculty of Education

Faculty of Education, University of Cambridge

Even the most cutting-edge AI models are prone to presenting fabrication as fact, dispensing dangerous information and failing to grasp social cues. Despite this, toys equipped with AI that can chat with children are a burgeoning industry.

Some scientists are warning that the devices could be risky and require strict regulation. In the latest study, researchers even observed a 5-year-old telling such a toy “I love you”, to which it replied: “As a friendly reminder, please ensure interactions adhere to the guidelines provided. Let me know how you would like to proceed.” But that’s not to say AI toys should be banished from the toybox altogether.

“There are other areas of life where we do accept a certain degree of risk in children’s play, like the adventure playground – there are risks; children do break their arms,” says Jenny Gibson at the University of Cambridge. “But we’re not banning playgrounds, because they’re learning the physical literacy and the social skills that go along with play. In a similar way for the AI toys, we want to understand: is the risk of perhaps being told something slightly odd now and again greater than the benefit of learning more about AI in the world, or having a toy that supports parent-child interactions, or has cognitive or social emotional benefits? I’d be loath to stop that innovation.”

To understand how these devices communicate with children, Gibson and her colleague Emily Goodacre, also at the University of Cambridge, watched 14 children under the age of 6 play with an AI-powered toy called Gabbo, developed by Curio Interactive. Gabbo – a small fluffy robot – was chosen because it was explicitly advertised for this age group.

The pair observed some worrying interactions, finding that the toy misunderstood the children, misread emotions and could not engage in developmentally important types of play. For instance, one child told the toy he felt sad, and it told him not to worry and changed the subject. “When he [Gabbo] doesn’t understand, I get angry,” said another child. The research is published in a report called AI in the Early Years.

Curio Interactive did not respond to New Scientist’s request for comment. But AI-powered toys are also widely available from retailers such as Little Learners – including bears, puppies and robots – which converse with children using ChatGPT. FoloToy offers panda, sunflower and cactus toys that can be used with various large language models, including those from OpenAI, Google and Baidu.

Miko, for example, offers robots that promise “age-appropriate, moderated AI conversations” for children, without disclosing which company trained the AI model, and claims to have already sold 700,000 units. The firm Luka offers an owl that promises “Human-Like AI with Emotional Interaction”. Little Learners, Miko and Luka all failed to respond to a request for comment.

But Hugo Wu at FoloToy told New Scientist that the company does consider the risks and sees AI as something that can enhance play, rather than replace human conversation and relationships. “Our approach is to ensure that interactions remain safe, age-appropriate and constructive. To achieve this, our systems use intent recognition together with multiple layers of filtering to minimise the possibility of inappropriate or confusing responses,” says Wu. “We have implemented mechanisms such as anti-addiction design features and parental supervision tools to help ensure healthy use within the family environment.”

Carissa Véliz at the University of Oxford, who works on the ethics of AI, says the technology represents a risk and an opportunity. “Most large language models don’t seem safe enough to expose vulnerable populations to them, and young children are one of the most vulnerable populations there are,” she says. “What is especially concerning is that we have no safety standards for them – no supervising authority, no rules. That said, there are some exceptions that show that, with adequate precautions, you can have a safe tool.”

Véliz references a collaboration between the free e-book library Project Gutenberg and Empathy AI in which, for example, you can chat with Alice from Alice in Wonderland. “The model never leaves the realm of the book, only answers questions about the book, like a storybook that only shares adventures and riddles from a book that is appropriate for children,” she says. “There is such a thing as safe AI, but most companies are not responsible enough to build a high-quality product, and without formal guardrails, it’s a buyer-beware area for consumers.”

Gibson says it’s too early to tell what the risks of AI toys could be, or their potential benefits. She and Goodacre stress that generative AI-powered toys need tighter regulation so that toy-makers programme their devices to foster social play and provide appropriate emotional responses. AI-makers should revoke access for toy-makers that don’t act responsibly, says Gibson, and regulators should bring in rules to “ensure children’s psychological safety”. In the meantime, the pair suggest that parents allow children to use such toys only under supervision.

An OpenAI spokesperson told New Scientist that “minors deserve strong protections and we have strict policies that all developers are required to uphold. We do not currently partner with any companies who have AI-powered toys for children in the market.” The UK Government’s Department for Science, Innovation and Technology (DSIT) did not respond to New Scientist’s questions about regulation of AI in children’s toys.

The UK government is currently considering other technology legislation designed to keep older children safe online. The UK’s Online Safety Act (OSA) came into force in July 2025, forcing websites to block children from seeing pornography and content that the government deems dangerous. The legislation was intended to make the internet safer, but tech-savvy children can easily sidestep the measures using tools like virtual private networks (VPNs) to appear as if they are browsing from other countries without strict rules.

Proposed amendments to a new law introduced by the Department for Education to support children in care and improve the quality of education – the Children’s Wellbeing and Schools Bill – sought to ban children in the UK from using social media and VPNs. Those amendments have now been voted down, but the government has promised to consult on both issues at a later date.
