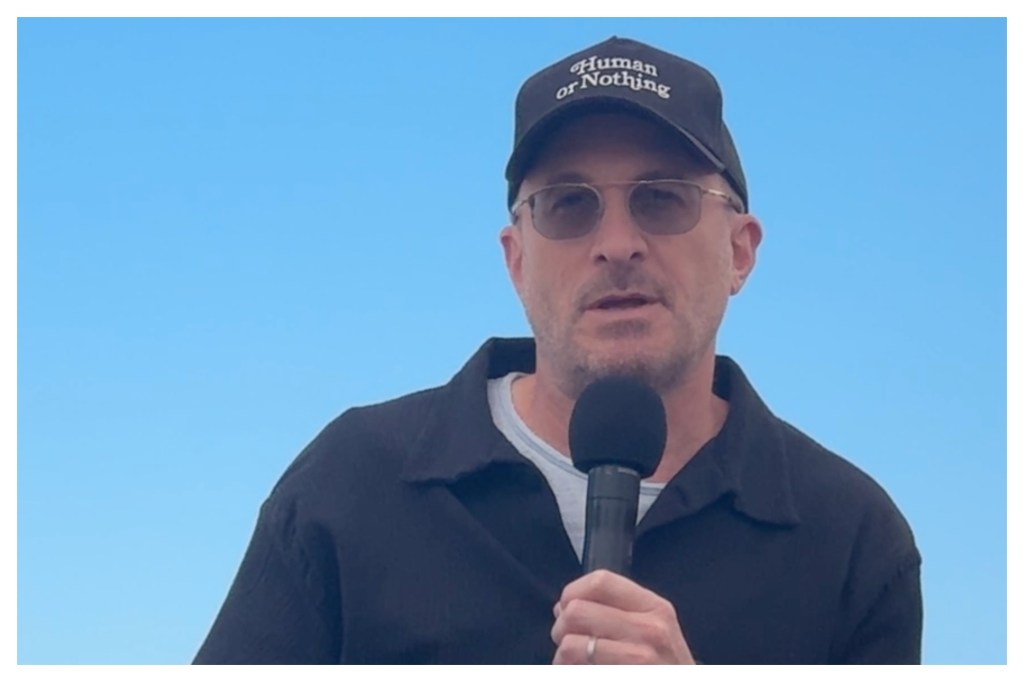

Black Swan and Requiem for a Dream director Darren Aronofsky has revealed he is pushing on with American Revolution-themed AI project On This Day… 1776, the first part of which was widely panned on its release in January.

The director told the AI for Talent Summit in Cannes on Saturday that the spirit of the project, made under the banner of his AI-based studio Primordial Soup, was purely experimental.

He explained he had hit on the idea of the project in November 2025 in the lead-up to the 250th anniversary of the Declaration of Independence on July 4, 1776.

“I was like could we make 30, 35 five-minute-long films about what happened on this day 250 years ago. It was always seen as an experiment,” he said.

He said the quality of the AI-driven work had come on leaps and bounds since the release of the first episode at the beginning of the year, which was widely slammed by Aronofsky fans.

“If you look at the first release we did in January, and then you compare it to the project we just released on April 29, it’s mind blowing. It’s not just the models. It’s also Primordial Soup’s pipeline, and it’s also the artists we’re working with who are getting better,” he said.

“I encourage you to watch it, because it’s an experiment to see how it’s going to progress, and we’re 1/4 of the year done and by December 24 when George Washington is crossing the Delaware, it’s going to be a whole another level of what is possible.”

Aronofsky was talking in conversation with James Manyika, President, Research, Labs, Technology & Society at Google on the second day of the Cannes Marché du Film’s AI for Talent Summit.

His company Primordial Soup is making its Cannes Official Selection debut this year with the short film Goodnight Lamby by Dustin Yellin, which plays in Cannes Classics on Tuesday.

He told Manyika that his curiosity in AI as a filmmaking tool had first been piqued by images coming out of generative AI app Midjourney in 2023.

“I was just blown away because I instantly realized that it was going to change everything that we do as filmmakers, it was going to have a huge impact. I wasn’t really sure what it would be, I formed Primordial Soup because I wanted to be ready to somehow get involved,” he said.

Goodnight Lamby is the fruit of a joint creative initiative between Primordial Soup and Google DeepMind to make three short films combining live action with AI.

They also include Eliza McNitt’s Ancestra, which played at Tribeca, and documentary Love Rendered by Dan Cogan and Liz Garbus, which is in post-production.

“What’s been an amazing thing about it, and to cut to the chase, because there’s so much fear out there about this, none of these movies, at least the ones that we’re originating, would exist without this technology. Meaning they’re not replacing anything, they’re purely additive,” said Aronofsky.

He dismissed “the myth” that AI-supported works are simply the product of putting the right prompts into an AI app.

“I’m sure Google would love that to be the reality… but that technology is so far away and such a science fiction idea… it takes a tremendous amount of human labor and artistry and intention to make anything good with this technology,” he said.

Aronofsky recounted how AI had been used to generate the image of a newborn child in Ancestra, which is inspired by director McNitt’s own traumatic birth story.

“When she was born, she almost died because she was born with a hole in her heart. She wanted to recreate this to honor her mother. For any filmmaker, shooting with a newborn baby causes many, many problems,” he said.

“There’s the deeply ethical issues of bringing a newborn baby to set,” he said, adding jokingly, “and do you actually want to be hanging out with parents who are willing to bring their newborn baby to a set… in a couple of days we were able to take whatever the actress was holding and turn it into a live baby, so it just solved a lot of problems.”

Aronofsky acknowledged fears over the impact of AI on creative industry jobs and human creativity, but suggested that ultimately the technology would liberate artists across all mediums.

He pointed to Yellin’s Cannes Classics-selected Goodnight, Lamby, featuring Chris Rock and Paul Rudd in the voice cast, as an example of this. The work taps into the multidisciplinary artist Yellin’s sculptures featuring layers of glass and collage work.

“For years he’s been trying to bring it to life, but the kind of effort and economy that it would take to bring something like that to life is incredibly challenging… this technology unlocked an incredible way to get it done. It’s basically about Dustin and his daughter. His daughter is playing his daughter, but leave it to Dustin to get Paul Rudd to play him and then add Chris Rock.”

“Basically his daughter enters one of his sculptures and becomes a character in the sculpture. It’s just incredibly fun, beautiful,” he said, suggesting it was in the tradition of Alice in Wonderland or The Red Balloon.

“I’m excited Cannes is leaning forward with these technologies, because once again, this is a film that would not have existed without this technology. They agreed to show it, I had a feeling, because of the tradition of The Red Balloon, and those types of movies here at this festival.”

He revealed that Love Rendered goes behind the scenes of a collaboration between Primordial Soup and Google DeepMind to try to create technology to help people who are losing their memories later in life by recreating them.

“There’s so much pushback against it, and I’d just love to talk about the word AI for a second, as AI is a terrible word. It’s a catchphrase for so many different things, and I think that the thing we deal with when we’re talking to ChatGPT about what the weather is, or what we’re going to do this day, or how we’re going to spend three days in Cannes, is very different than the AI we’re using when we generate images,” he said.

“It’s not impersonating a person, it’s actually a tool, and it’s very much in the tradition of the evolution of filmmaking. From when sound was first introduced, there was incredible pushback from all the people playing musical instruments. When the portable camera came, we suddenly got films like Breathless and the French New Wave. When VFX came, that unlocked the superhero film,” he said.

“It’s going to change a lot. It’s not necessarily replacing, and arguably there will be many additive elements to it.”

Aronofsky added that if he were an emerging filmmaker in his 20s today, he would be experimenting with AI, but said he did not believe the technology would ultimately replace cinema.

“Guillermo del Toro, Leonardo DiCaprio movies at IMAX will always exist, and people will always want to go see those movies, and I will continue to make movies like that. All that type of filmmaking will always exist… it’s not one or the other.”

He also challenged notions that AI will replace human storytellers and directors, suggesting rather it could liberate artists, pointing to the example of Orson Welles.

“It’s not anywhere in our lifetimes, no matter how much time a computer is going to spend trying to figure out how to tell a human story, it’s just such a huge effort beyond any single person on the planet telling a story, so that’s not really the danger,” he said.

“It may actually be even easier to tell stories… Orson Welles being the perfect example of how many years did that genius waste trying to raise money to do his art or fight for his cut, versus if Orson Welles could just sit there and be wherever his creative mind wanted to go create. That’s the exciting part of storytelling. I think storytellers more than ever will have an easier time to tell stories.”